This makes more sense than Tesla in Austin

By Gary S. Vasilash

While there is a lot of attention being paid to Tesla’s rollout of some 10 vehicles in Austin that are operating autonomously—with a safety driver on board and apparently people back in Tesla HQ monitoring the fleet, ready to kick in with teleoperation help if needed—there is virtually no attention being paid to what is happening in Cambridge, UK.

This week an autonomous Mellor Orion E electric midi bus (a low-floor transporter that can be configured to accommodate a maximum of 16 passengers) equipped with a CAVStar Automated Drive System started rolling through the streets of the university town.

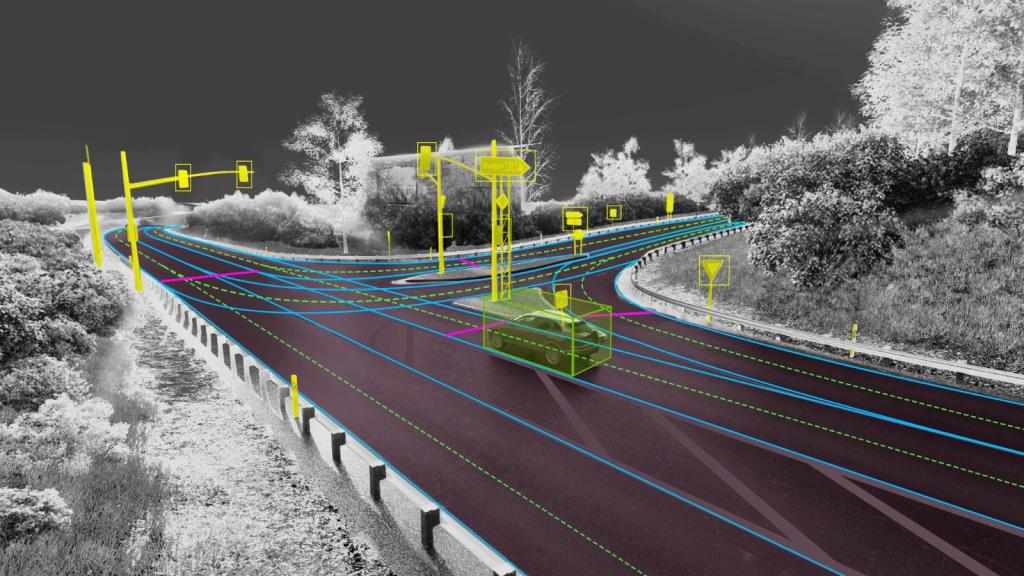

The CAVStar system is engineered by Fusion Processing. It comprises an AI processing unit, radar, LIDAR, optical cameras, and ultrasonic sensors.

The Cambridge vehicle is said to meet the requirements of SAE Level 4 autonomy.

The bus is running as part of the Connector project, which is led by the Greater Cambridge Partnership and supported by Innovate UK and the Centre for Connected & Autonomous Vehicles.

The bus initially ran without passengers through the areas of Cambridge where it now operates, to determine its fitness for use.

Dan Clarke, head of Innovation and Technology at the Greater Cambridge Partnership, said:

“People may have already seen the bus going around Eddington and Cambridge West from Madingley Park & Ride recently, as, after the extensive on-track training with the drivers, we’ve been running the bus on the road without passengers to learn more about how other road-users interact with the technology. We’re now moving gradually to the next stage of this trial by inviting passengers to use Connector.

“As with all new things, our aim is to introduce this new technology in a phased way that balances the trialling of these new systems with safety and the passenger experience. This will ensure we can learn more about this technology and showcase the potential for self-driving vehicles to support sustainable, reliable public transport across Cambridge.”

Somehow this seems more substantial than the reports out of Austin about the performance of some of those Tesla vehicles.

In addition to which: if, as Musk has proclaimed in his various “Master Plans,” his goal is to reduce overall energy use (yes, targeting fossil fuels, but even renewable energy systems are far from being zero-emissions), then doesn’t a mass transit vehicle that can transport plenty of people make more sense than autonomous passenger cars?